How judgment and automation work best together

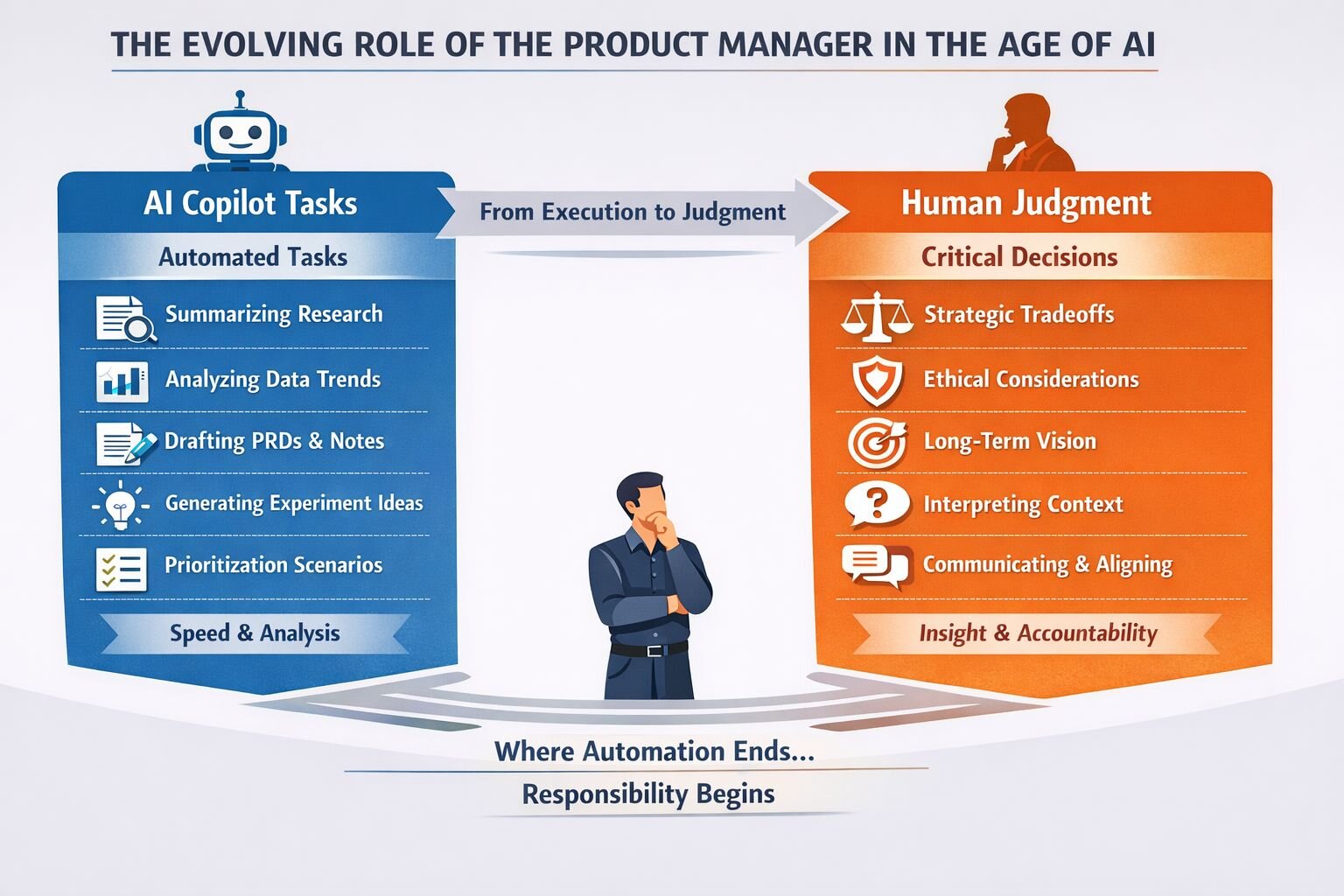

AI copilots are quickly becoming part of everyday product work. They help product managers summarise research, draft PRDs, analyse metrics, and move faster through tasks that once consumed hours of focused effort. For many teams, these tools already feel indispensable. As they improve, it is natural to ask how far their role might extend, and what that means for product judgment.

The short answer is that AI copilots are powerful accelerators, but they are not decision makers. As copilots become more capable, product judgment becomes more important.

What AI copilots change and what they don’t

Copilots reduce friction. They handle repetitive work, surface relevant information, and move teams faster from question to analysis. Product organisations already use AI assistants to synthesise user interviews, draft positioning documents, analyse usage data, and generate early solution concepts. The productivity gains are real.

This reclaimed time shifts where product effort goes. Instead of preparing decks or consolidating notes, product managers can spend more time reviewing assumptions, pressure-testing insights with customers, and thinking through second-order effects. This is where copilots deliver their greatest value: creating space for better thinking, not replacing it.

What copilots do not change is the nature of product decisions. Product choices involve tradeoffs, incomplete information, organisational constraints, and long-term consequences. AI copilots work on patterns and probabilities, but they do not understand intent. They can surface options, but they cannot decide which option best aligns with strategy, customer trust, or long-term impact.

Why product judgment still matters

Product judgment is often mistaken for intuition, but it is a learned and disciplined skill. It involves framing the right problem, deciding which signals matter, and balancing user needs, business goals, and technical realities. Most importantly, judgment carries accountability. Product managers own the outcomes of their decisions, including the risks and tradeoffs they accept.

As AI becomes more capable, this responsibility does not diminish. It becomes clearer. When a copilot generates a recommendation, a human still decides whether to act on it. When AI accelerates experimentation, someone still determines which experiments are worth running and how success is measured. Judgment is not replaced by automation.

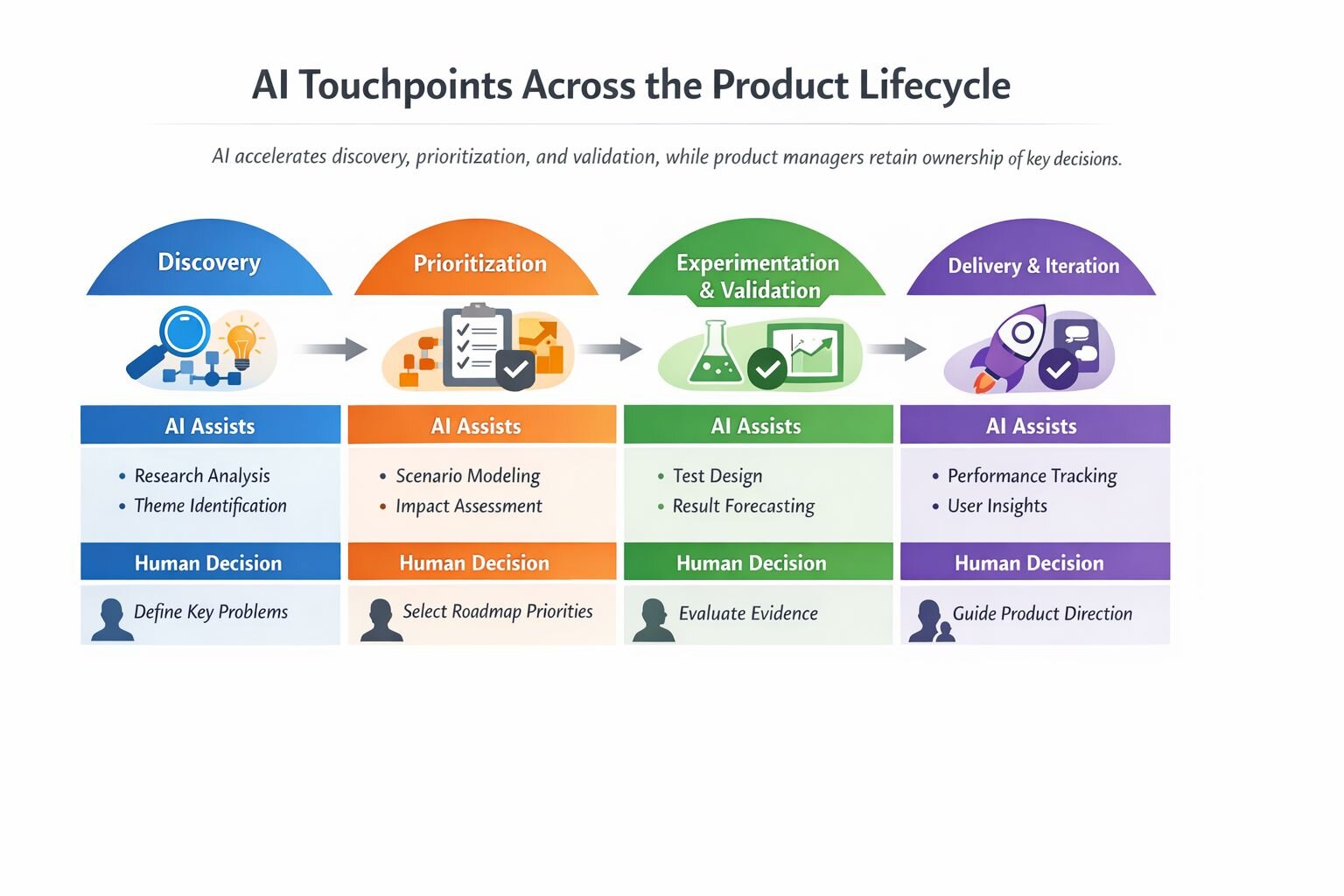

This shows up across the product lifecycle. Copilots can synthesise research, generate ideas, and model outcomes, but humans decide which problems are worth solving, which ideas align with strategy, and when evidence is sufficient to move forward. AI may shorten the loop, but people still determine its direction.

Practical ways product teams use AI copilots today

- Discovery and research synthesis. Teams use copilots to synthesise interview notes, support tickets, and survey responses to surface themes. Human judgment is still required to decide which patterns reflect real customer problems versus noise. The copilot reduces cognitive load; the product manager decides what matters.

- Prioritisation and roadmap discussions. AI is used to generate alternative prioritisation scenarios based on different weighting assumptions, such as revenue impact, customer reach, or operational cost. These scenarios make tradeoffs explicit but do not determine the roadmap. Final prioritisation decisions remain human-owned.

- Experiment design and validation. Copilots help propose experiment variants, estimate sample sizes, and identify potential confounding variables. Product teams still decide when results are strong enough to act on and when moving forward with incomplete data is acceptable.

- Explicit boundaries for AI use. Teams intentionally avoid using AI for high-impact decisions involving customer trust, policy changes, or long-term platform direction. This boundary-setting reflects mature product judgment: knowing not only what can be automated, but also what should not be.

A practical lesson from using AI in product work

A product lead once walked me through a situation that stuck with me because it captured both the promise and the limits of AI copilots in real product work.

Ahead of a roadmap conversation, their team prepared a pre-read using a general-purpose AI copilot alongside the tools they already used day to day. They pulled engagement metrics from their analytics platform, exported recent feature-level usage trends, and added qualitative input from user interviews and support tickets that had been tagged and clustered in their research repository. All of that context was fed into the copilot with a simple goal: identify where the strongest signals for improvement appeared to be emerging.

Within minutes, the copilot returned a clear recommendation. One feature stood out. Users who engaged with it more frequently showed higher retention, and recent feedback clustered around requests to expand its functionality. The output was structured, statistically sound, and easy to follow. As the product lead described it to me, “If this had been a slide in a deck, no one would have questioned it.”

Going into the roadmap meeting, the recommendation felt obvious. The data aligned. The qualitative feedback reinforced it. It looked like exactly the kind of insight teams invest in analytics and research to uncover.

But as the discussion unfolded, the context shifted.

During the review, the engineering lead pointed out something the copilot could not see. The feature being recommended sat on top of a part of the platform that the team was actively trying to simplify. A broader refactor was already underway to reduce complexity in that area. Expanding the feature now would likely lift a short-term engagement metric, but it would also introduce new dependencies, slow the migration, and increase future maintenance costs.

None of that context had been included in the copilot’s inputs. It wasn’t captured in dashboards or research notes. It lived in architectural decisions, sequencing commitments, and shared understanding across product and engineering. The model had done exactly what it was asked to do, based on the data it was given.

What changed was the conversation. Because the analysis arrived quickly, the team didn’t spend the meeting debating whether the numbers were right. Instead, they focused on tradeoffs. They documented why they were choosing not to follow the most data-supported option and captured that reasoning alongside the roadmap decision.

In the end, they decided not to act on the recommendation. And yet, the product lead considered the exercise a success. The copilot had narrowed the field, surfaced the strongest candidate, and accelerated alignment.

This example reflects what many product teams experience when AI is introduced thoughtfully into their workflow. Copilots are effective at synthesising inputs, surfacing patterns, and narrowing the field of options. They help teams move faster toward a decision. They are not equipped to decide when a data-backed recommendation is wrong for the moment. That responsibility still belongs to product leaders who understand context, constraints, and consequences.

Judgment in action

The distinction between automation and judgment becomes clearer when looking at organisations that have been intentional about AI adoption. Duolingo, for example, has spoken openly about using AI to scale content creation and improve internal workflows. At the same time, product leaders retained responsibility for quality, learning outcomes, and user trust. AI expanded what was possible, but judgment defined what was acceptable.

Less successful examples tend to follow a different pattern. Teams treat AI output as implicitly authoritative because it appears objective or data-driven. Over time, this erodes accountability. When decisions fail, it becomes unclear whether the issue was the model, the data, or the absence of human oversight. Strong product judgment prevents this diffusion of responsibility by keeping ownership explicit.

The evolving role of the product manager in the age of AI

Human judgment becomes essential once decisions involve tradeoffs, risk, or long-term impact. AI can surface patterns, model scenarios, and suggest options, but product managers decide what to prioritise, which signals matter, and which outcomes are acceptable, especially when metrics conflict with user trust, accessibility, or strategic direction.

Product managers decide how to interpret signals, when metrics are misleading, and when qualitative insight should outweigh quantitative data. These framing decisions shape everything that follows and cannot be delegated. They also act as interpreters and communicators. AI can generate analyses and recommendations, but humans are responsible for explaining decisions, aligning teams around tradeoffs, and maintaining trust with customers. In an AI-assisted organisation, execution becomes faster, but judgment becomes more visible and more consequential. AI reduces the cost of analysis while increasing the importance of deciding what to do with it.

AI copilots in practice: tools, prompts, and guardrails in the product lifecycle

Discovery and research synthesis

In discovery, teams use research repositories such as Dovetail, Condens, and Notably primarily to reduce synthesis time, not to replace insight generation. AI features are commonly used to cluster interview notes, tag recurring themes across large volumes of qualitative data, and surface patterns that might otherwise be missed when working under time constraints.

For example, teams often upload raw interview transcripts, support tickets, or open-text survey responses and ask the tool to group feedback by topic or sentiment. This allows product managers and researchers to quickly see where signals concentrate. Human judgment then determines which themes represent meaningful unmet needs, which are edge cases, and which reflect temporary noise. AI accelerates sensemaking, but problem definition remains human-owned.

Prioritisation and roadmap planning

In prioritisation, roadmap tools like Productboard, Aha!, and Jira Product Discovery are used to organise inputs and model tradeoffs, not to decide what gets built. Teams commonly use AI copilots to summarise large volumes of feature requests, cluster similar ideas, or generate alternative prioritisation scenarios based on different weighting assumptions.

For instance, a product manager might ask the copilot to model how priorities change if customer reach is weighted more heavily than revenue impact, or if operational cost is treated as a constraint. These scenarios help teams have clearer discussions with stakeholders, but they do not dictate outcomes. Because AI lacks awareness of long-term strategy, sequencing, and organisational commitments, final prioritisation decisions remain explicitly human-led.
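The weighting scenarios described here can be sketched in a few lines of Python. This is a minimal illustration, not how any of the tools named above work: the feature names, the 0 to 10 scores, the weights, and the `rank` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    name: str
    revenue_impact: float    # hypothetical 0-10 score
    customer_reach: float    # hypothetical 0-10 score
    operational_cost: float  # hypothetical 0-10 score, higher = more costly


def rank(features, weights, max_cost=None):
    """Rank feature names by weighted score; optionally treat cost as a hard constraint."""
    eligible = [
        f for f in features
        if max_cost is None or f.operational_cost <= max_cost
    ]

    def score(f):
        return (weights["revenue_impact"] * f.revenue_impact
                + weights["customer_reach"] * f.customer_reach)

    return [f.name for f in sorted(eligible, key=score, reverse=True)]


# Illustrative data only.
FEATURES = [
    Feature("bulk export", revenue_impact=8, customer_reach=3, operational_cost=4),
    Feature("onboarding checklist", revenue_impact=4, customer_reach=9, operational_cost=2),
    Feature("sso integration", revenue_impact=9, customer_reach=5, operational_cost=8),
]

revenue_led = rank(FEATURES, {"revenue_impact": 0.7, "customer_reach": 0.3})
reach_led = rank(FEATURES, {"revenue_impact": 0.3, "customer_reach": 0.7})
cost_capped = rank(FEATURES, {"revenue_impact": 0.7, "customer_reach": 0.3}, max_cost=5)

for label, order in [("revenue-led", revenue_led),
                     ("reach-led", reach_led),
                     ("cost-capped", cost_capped)]:
    print(f"{label}: {order}")
```

The three orderings differ, which is the point: the scenarios make the tradeoff visible, while the choice of weights and constraints stays with the team.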

Experimentation and validation

During experimentation and validation, analytics and testing platforms such as Amplitude, Mixpanel, and Optimizely are increasingly paired with AI to speed up analysis and learning cycles. Common use cases include anomaly detection, automated summaries of experiment results, and surfacing unexpected patterns in behavioural data.

Teams use these capabilities to shorten the time between running an experiment and reviewing outcomes. For example, AI might flag segments where results diverge from expectations or summarise key movements across metrics. Product managers and data teams still interpret whether those signals are meaningful, whether results are statistically or practically significant, and whether the evidence is strong enough to act on. AI accelerates insight discovery, but decisions about next steps remain human judgment calls.
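The difference between statistical and practical significance can be made concrete with a small sketch. It assumes a simple two-variant conversion experiment and uses a standard two-proportion z-test; the function name, the `min_lift` threshold, and the sample numbers are illustrative assumptions, not output from any platform mentioned above.

```python
import math
from statistics import NormalDist


def compare_conversion(control_conv, control_n, variant_conv, variant_n,
                       min_lift=0.01, alpha=0.05):
    """Two-proportion z-test plus a separate, team-chosen practical threshold."""
    p_control = control_conv / control_n
    p_variant = variant_conv / variant_n
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    z = (p_variant - p_control) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided
    lift = p_variant - p_control
    return {
        "lift": lift,
        "p_value": p_value,
        "statistically_significant": p_value < alpha,
        # min_lift is a judgment call: the smallest change worth acting on.
        "practically_significant": abs(lift) >= min_lift,
    }


# Hypothetical experiment: 5.0% vs 5.3% conversion on large samples.
result = compare_conversion(10_000, 200_000, 10_600, 200_000)
print(result)
```

With samples this large, a 0.3 point lift clears the statistical bar but not the one point practical threshold assumed here. Whether that threshold is the right one is exactly the kind of decision the analysis cannot make for the team.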

Delivery and iteration

In delivery and iteration, AI support is typically focused on monitoring and insight surfacing rather than directing action. Teams use analytics tools and internal dashboards with AI features to track performance trends, detect regressions, or summarise weekly or monthly changes across key metrics.

For example, AI may highlight a sudden drop in engagement or performance anomalies after a release. Product managers then decide whether the issue warrants immediate intervention, further investigation, or acceptance as a temporary fluctuation. At this stage, AI is least trusted to recommend actions, as decisions often involve tradeoffs between speed, stability, user impact, and long-term direction.
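As a rough illustration of the kind of check behind a "sudden drop" alert, the sketch below flags days whose value sits far from a trailing baseline. It is a deliberately simple z-score rule with hypothetical data, window, and threshold; real monitoring tools typically use more robust methods.

```python
from statistics import mean, stdev


def flag_anomalies(values, window=7, threshold=3.0):
    """Return (index, z_score) for points far from the trailing-window mean."""
    flagged = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        sigma = stdev(baseline)
        if sigma == 0:
            continue  # flat baseline: z-score is undefined, so skip
        z = (values[i] - mean(baseline)) / sigma
        if abs(z) >= threshold:
            flagged.append((i, z))
    return flagged


# Hypothetical daily engagement counts with one sharp drop after a release.
daily_active = [1000, 1010, 990, 1005, 995, 1002, 998, 1001, 850, 1003]
anomalies = flag_anomalies(daily_active)
flagged_days = [index for index, _ in anomalies]
print(flagged_days)
```

The check surfaces the drop on day 8 but says nothing about whether it warrants a rollback, an investigation, or no action. That call remains with the product manager.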

General-purpose copilots across stages

Across all stages of the product lifecycle, many teams rely on general-purpose copilots such as ChatGPT, Claude, and Gemini for synthesis, drafting, ideation, and exploratory analysis. These tools are commonly used to summarise research, draft PRDs or release notes, outline experiment plans, and prepare executive-facing narratives.

They perform best in internal, low-risk workflows, where speed and structure matter more than precision. Teams are cautious about using them for final roadmap commitments, pricing or policy decisions, or unreviewed customer-facing output. In these cases, the cost of missing context or misinterpreting nuance is too high to delegate even partially.

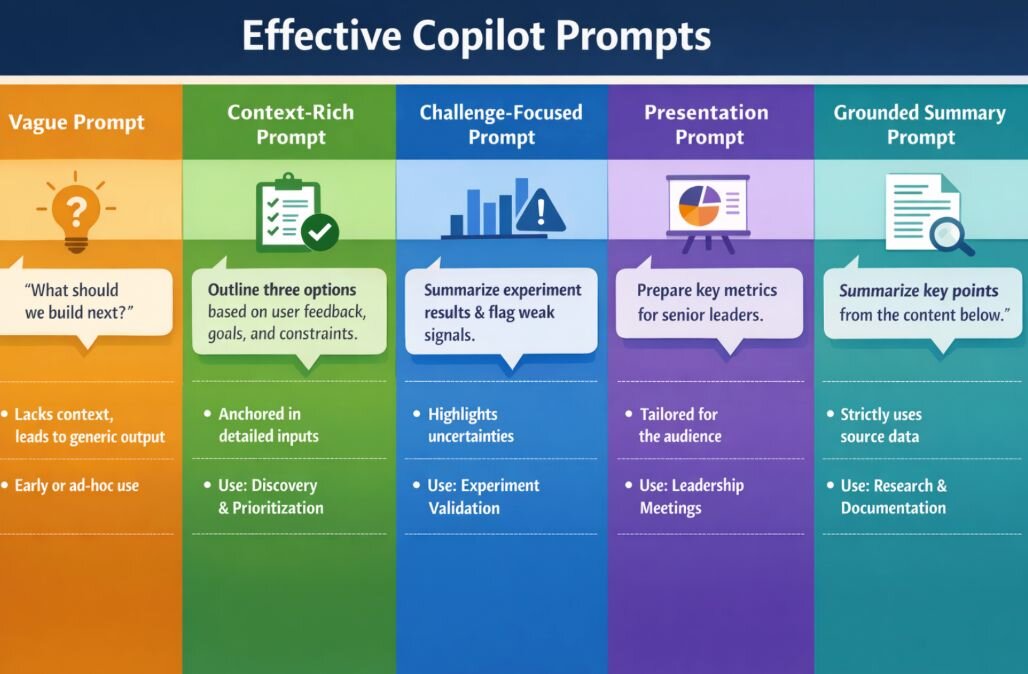

Across teams, prompt quality has a bigger impact than tool choice. Different prompts are useful in different situations.

Vague prompt: “What should we build next?”

Why it fails: It provides no context or constraints, so the copilot fills the gaps with assumptions.

When it appears: Ad-hoc or early AI use, often producing generic output.

Context-rich prompt: “Based on the following user feedback, business goals, and technical constraints, outline three options we could explore next. For each option, describe potential risks and what additional data would be needed before deciding.”

Why it works: It anchors the copilot in known inputs and limits its role to option generation.

When to use it: Discovery and early prioritisation.

Challenge-focused prompt: “Summarise the experiment results, highlight any weak or contradictory signals, and list assumptions that could affect interpretation.”

Why it works: It surfaces uncertainty instead of reinforcing a single narrative.

When to use it: Experiment reviews and validation.

Communication/presentation prompt: “This dashboard will be presented to senior leaders. Given the project background, audience context, and upcoming decisions, suggest which metrics and visualisations would provide the clearest snapshot of performance, and explain how to present them for impact.”

Why it works: It grounds the copilot in audience and purpose.

When to use it: Leadership reviews, campaign reporting, and decision-readiness meetings.

Grounded summarisation prompt: “Summarise the key points from the content below. Use only the information provided, flag where evidence is unclear or missing, and do not infer beyond the source material.”

Why it works: It constrains the model to source data and reduces hallucinations.

When to use it: Research synthesis and documentation.

Better prompts matter because they give AI copilots the context and boundaries they need to be useful. Clear prompts specify background, constraints, and the intended use of the output. Without this clarity, copilots tend to produce generic or misleading results. Teams build effective prompts by reusing structures that work, providing consistent context, and reviewing outputs critically. Over time, this repetition improves reliability and reduces the effort needed to interpret or correct results.

In practice, teams improve copilot effectiveness through consistent usage patterns over time. This includes reusing prompt templates, providing a standard context pack with goals and constraints, and setting clear expectations that outputs are inputs rather than decisions. Teams that have used copilots for six to twelve months consistently report that shared prompting practices and strong human review norms matter more than switching tools.
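One way a reusable prompt template and a standard context pack might look in code is sketched below. The `ContextPack` structure, the `build_prompt` helper, and the sample values are hypothetical; the template wording is adapted from the context-rich and grounded prompts above. The sketch only assembles text and does not call any copilot API.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class ContextPack:
    """Standard context shared across prompts (hypothetical structure)."""
    goals: list[str]
    constraints: list[str]
    intended_use: str


# Wording adapted from the context-rich and grounded prompts above.
OPTIONS_TEMPLATE = Template(
    "Business goals:\n$goals\n\n"
    "Constraints:\n$constraints\n\n"
    "Intended use of this output: $intended_use\n\n"
    "Source material:\n$source\n\n"
    "Using only the information provided, outline three options we could "
    "explore next. For each option, describe potential risks and what "
    "additional data would be needed before deciding. Flag where evidence "
    "is unclear or missing."
)


def _bullets(items: list[str]) -> str:
    return "\n".join(f"- {item}" for item in items)


def build_prompt(pack: ContextPack, source: str,
                 template: Template = OPTIONS_TEMPLATE) -> str:
    """Fill the template, refusing to build a prompt with missing context."""
    if not pack.goals or not pack.constraints or not source.strip():
        raise ValueError("goals, constraints, and source material are all required")
    return template.substitute(
        goals=_bullets(pack.goals),
        constraints=_bullets(pack.constraints),
        intended_use=pack.intended_use,
        source=source.strip(),
    )


# Illustrative values only.
pack = ContextPack(
    goals=["Improve 90-day retention"],
    constraints=["Platform refactor in progress; avoid new dependencies"],
    intended_use="Input to a roadmap discussion, not a decision",
)
prompt = build_prompt(pack, "Interview notes: users ask for faster exports.")
print(prompt)
```

Refusing to build a prompt without goals, constraints, or source material is a small guardrail against falling back into the vague "What should we build next?" pattern.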