The framework I used to turn design research into a product repositioning that secured enterprise clients and contributed to Series B funding.

When I joined Morressier’s Research Integrity team in 2023, we had a problem that looked like a sales problem. Our Integrity Manager product could detect plagiarism, image manipulation, and citation irregularities across multiple research papers and volumes. The technology worked. Publishers valued it. But deals weren’t closing.

The sales team kept hearing the same feedback: “We love the checks, but what do we do with all these flags?” Publishers would see our demos, get excited about our detection capabilities, then go quiet during procurement conversations.

We were stuck in what I later realized was a commodity trap. Every competitor offered some form of anomaly detection. We were competing on detection comprehensiveness and price. The market had moved past detection as a differentiator.

Then a series of stakeholder interviews revealed something nobody was talking about:

Publishers didn’t actually need better detection. They needed investigation tools.

That insight led to a complete product repositioning that helped us secure clients like the Institute of Electrical and Electronics Engineers (IEEE) and the American Chemical Society (ACS), contributed to our $16.9M Series B funding, and earned us a finalist position for the 2024 Innovation in Publishing award from the Association of Learned and Professional Society Publishers (ALPSP).

Here’s how design research became product strategy, and what I learned about using research to drive business outcomes.

The real problem wasn’t what we thought

In early 2024, our CEO gathered the product team for a hard conversation. Integrity Manager sales had stalled. We’d spent months improving our detection algorithms, adding more integrity check types, making the dashboard cleaner. Nothing was moving the needle.

The assumption was that we needed better features. More sophisticated checks. Faster processing. Better visualization of data.

I suspected we were focused on the wrong problem. Working with Leandro Contreras, another product designer on the team, and with backing from our CTO and VP of Product, I proposed a design sprint focused not on improving what we had, but on understanding what publishers actually needed.

The key question we needed to answer was “What happens after something is detected?”

Discovery: Following the investigation trail

We kicked off by digging into client feedback. This wasn’t new research; it was sitting in sales calls, support tickets, and customer success notes. We just hadn’t been looking at it through a strategic lens.

The pattern was clear. Publishers valued our integrity checks, but the checks themselves created problems:

Problem 1: Detection without direction

When our system flagged potential issues, publishers had to manually investigate each one. They’d open the paper, review the flagged section, cross-reference citations, check image data, consult with editors.

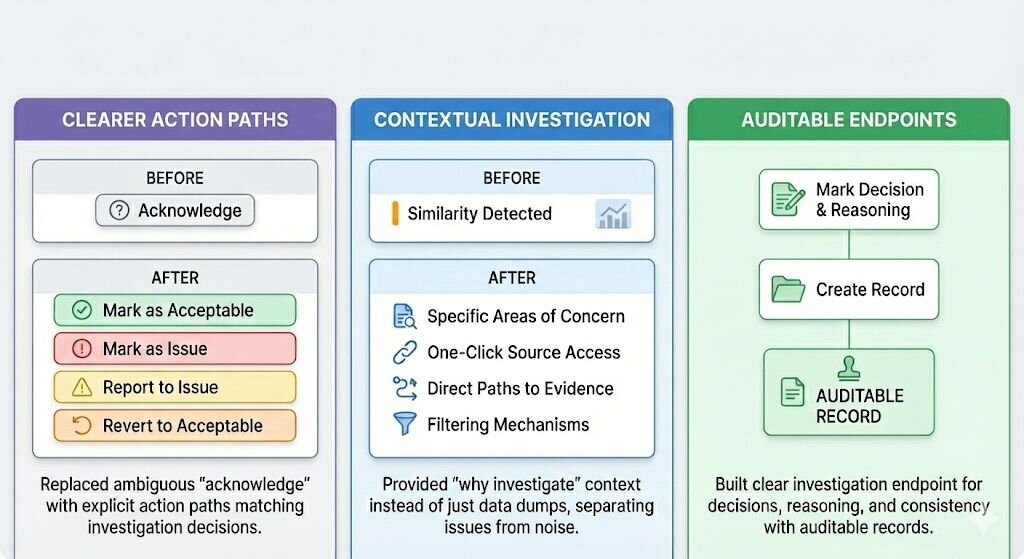

Problem 2: The acknowledge confusion

We had a feature where users could “acknowledge” a check. Seems simple, right? But in user interviews, we discovered this one word was creating massive confusion. Some users thought acknowledging meant “I’m marking this as acceptable.” Others interpreted it as “I’ve seen this issue.” Still others believed it meant “I’m flagging this for further review.”

The same feature meant completely different things to different users. We clearly didn’t understand our users’ mental models.

Problem 3: Workflow inefficiency

The manual work of assessing each integrity check and deciding on next steps created a significant burden. This became especially problematic when dealing with multiple issues, forcing users to create their own systems for managing investigations outside our platform.

The strategic insight

Every integrity flag required publishers to:

- Determine severity (Is this actually a problem or a false positive?)

- Gather context (What’s the full picture here?)

- Decide on action (Reject the paper? Request revisions? Mark as acceptable?)

- Document decisions (For audit trails and consistency)

- Track progress (Especially when dealing with multiple reviewers)

“Our product stopped at step one. Everything else happened in email threads, spreadsheets, and sticky notes.”

The market gap was investigation management.

Competitive analysis: Validating the opportunity

Before proposing a major strategic shift, I needed to validate that this was actually a market opportunity, not just a feature request.

I analyzed our main competitors: Turnitin’s iThenticate, Paperpal, and other research integrity platforms. Every single one positioned around detection capabilities. “We catch more plagiarism.” “We detect image manipulation.” “We find citation irregularities.”

Nobody was talking about investigation. Nobody was helping publishers manage the process after detection.

This was our blue ocean. The market was commoditized around detection, but wide open for investigation tools.

Blue Oceans: New, unknown market spaces where demand is created, not fought over, offering ample room for rapid, profitable growth.

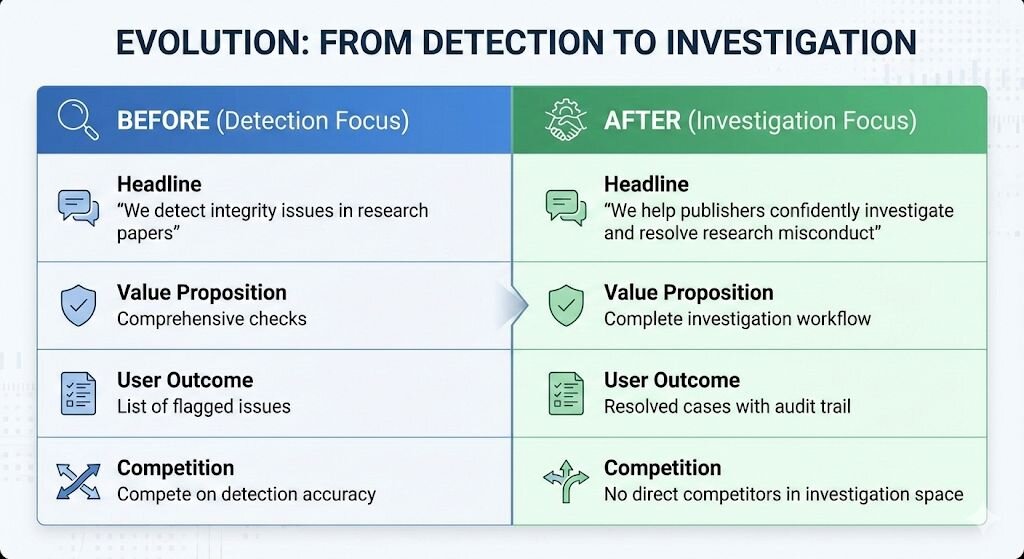

The strategic pivot: From passive to active

With significant research showing what publishers needed and competitive analysis showing market opportunity, we made a strategic decision: transform Integrity Manager from a passive detection tool into an active investigation platform.

The shift looked like this:

This repositioning had implications across the entire product:

Product roadmap: Instead of building more check types, we’d build investigation tools like contextual evidence gathering, decision frameworks, collaboration features, and audit trails.

Go-to-market: Sales conversations would shift from “look at all our checks” to “here’s how you investigate efficiently and confidently.”

Pricing: Investigation tools justify premium pricing because they solve the expensive problem (publisher time and risk), not the commodity problem (detection).

Execution: The design sprint

With strategic direction clear, we needed to figure out what investigation tools actually looked like. We ran a focused design sprint with three phases: discovery, ideation, and validation.

Discovery phase

We dug deeper into the investigation process itself. What does a Research Integrity Officer actually do when they see a flagged issue?

Through stakeholder interviews, we mapped the investigation journey:

- Review the flagged content and system evidence

- Gather additional context (author history, related papers, journal policies)

- Determine severity and appropriate action

- Document the decision with justification

- Communicate with relevant parties (authors, editors, reviewers)

- Track status through resolution

We needed to support the entire workflow.

Ideation & sketching

The team generated solutions for each step of the investigation process. We focused on three key interventions:

Testing prototypes

We tested with two audiences:

- 4 internal team members who knew research integrity deeply

- 2 customer representatives (a Head of Research Integrity and a Peer Review and Research Integrity Manager from a large publishing house)

The feedback was immediate and clear. One Research Integrity Manager told us:

“This is exactly what we need. Right now we’re tracking everything in spreadsheets. Having investigations managed in the same place as detection would save us days every month.”

The internal team provided crucial feedback on edge cases and workflow nuances. The customer representatives validated that we’d correctly understood their investigation process.

By day 4, we had a prototype that fundamentally captured our product’s new value proposition.

The Framework: 5 questions that turn research into strategy

Looking back, the process that took us from “improve detection” to “build investigation platform” followed a pattern I now use for any strategic research initiative.

1. What happens after our product is used?

Most product research asks “How do people use our product?” The strategic questions are “What do people do after they use our product?” and “Does our product leave room for avoidable patterns and behaviours?”

2. What are users doing outside our product that could be done inside it?

Look for the spreadsheets, the email threads, the workarounds. These are often strategic opportunities, not just feature requests.

3. What problem are competitors NOT solving?

If everyone is solving the same problem, that problem is commoditized. The strategic opportunity is in the unsolved adjacent problem.

4. What would change our revenue model?

Detection tools compete on price. Investigation tools compete on value. The strategic question is: what would justify 2x or 3x our current pricing?

5. What would we build if we repositioned entirely?

Don’t ask “What features should we add?” Ask “If we were building from scratch for this adjacent problem, what would we build?”

User research answers “What do users need?” Strategic research answers “What market opportunity does this reveal?”

For designers, this is the unlock. You’re already doing research. You’re already uncovering insights. The strategic move is connecting those insights to business outcomes.

You don’t need permission to think strategically. You need to connect what you’re already learning to the questions leadership is asking.

Results

Enterprise Deals Closed

Within months of launching the investigation features, we closed 6 deals with medium and large publishers. The conversation in sales calls had completely shifted. Instead of “why is your detection better than iThenticate?” we were hearing “nobody else helps us manage investigations like this.”

Two of those deals were IEEE (Institute of Electrical and Electronics Engineers) and ACS (American Chemical Society), major enterprise clients who specifically valued the investigation workflow capabilities.

Series B contribution

The product repositioning and enterprise wins contributed to Morressier raising $16.9M in Series B funding. Investors saw that we’d found strategic differentiation in a commoditized market.

Industry recognition

In 2024, we were named a finalist for the Innovation in Publishing award from the Association of Learned and Professional Society Publishers (ALPSP). They specifically recognized how we’d transformed research integrity from detection to investigation.

Product engagement

Clearer action paths and investigation tools made integrity reports actionable, and user engagement with those reports increased. Publishers were actually using the product to manage investigations, not just run checks.

Your turn: The 90-day action plan

If you want to start turning design research into product strategy, here’s a practical starting point:

Weeks 1–2: Audit existing research

Go through the last quarter of user interviews, support tickets, and sales calls. Look for patterns about what users do after using your product, what tools they use alongside yours, and what problems persist despite your product.

Weeks 3–4: Run competitive analysis

Map what problems competitors are solving vs. not solving. Look for market gaps, not feature gaps. Where is everyone competing (commoditized)? Where is nobody competing (opportunity)?

Weeks 5–6: Calculate cost of current state

Quantify the business impact of the problem you’ve identified. How much time does it cost users? How much money? How much risk? This becomes your business case.
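
To make that concrete, here is a minimal sketch of the arithmetic in Python. Every name and number in it (`CurrentStateCost`, `flags_per_month`, `hours_per_flag`, `hourly_rate`, and the 50% time saving) is a hypothetical placeholder, not Morressier data. Swap in whatever your own interviews and support tickets show.

```python
from dataclasses import dataclass


@dataclass
class CurrentStateCost:
    """Inputs for a rough annual cost estimate. All values are hypothetical."""

    flags_per_month: int   # issues a team has to investigate each month
    hours_per_flag: float  # manual effort spent per investigation
    hourly_rate: float     # loaded hourly cost of the person investigating

    def hours_per_year(self) -> float:
        """Total manual investigation time over twelve months."""
        return self.flags_per_month * self.hours_per_flag * 12

    def cost_per_year(self) -> float:
        """Annual cost of that manual time."""
        return self.hours_per_year() * self.hourly_rate


def annual_savings(cost: CurrentStateCost, time_saved_fraction: float) -> float:
    """Estimated yearly savings if a share of the manual time is removed."""
    if not 0 <= time_saved_fraction <= 1:
        raise ValueError("time_saved_fraction must be between 0 and 1")
    return cost.cost_per_year() * time_saved_fraction


# Illustrative inputs only: 40 flags a month, 1.5 hours each, 60 per hour.
example = CurrentStateCost(flags_per_month=40, hours_per_flag=1.5, hourly_rate=60.0)

print(f"Hours per year: {example.hours_per_year():.0f}")                    # 720
print(f"Cost per year: {example.cost_per_year():,.0f}")                     # 43,200
print(f"Savings at 50% time saved: {annual_savings(example, 0.5):,.0f}")    # 21,600
```

The point is not precision. A rough, clearly labeled estimate like this gives leadership a number to react to, and it only covers time; risk usually has to be argued qualitatively alongside it.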

Weeks 7–8: Propose strategic initiative

Create a brief that outlines: problem, market opportunity, competitive gap, business case, proposed direction. Share it with product leadership as “strategic research findings.”

Weeks 9–12: Validate and iterate

Test your strategic hypothesis with users and stakeholders. Build a lightweight prototype if needed. Refine based on feedback.

You don’t need a new role to do strategic work. You need to frame the work you’re already doing in strategic terms.

Start this week. Perfection is the enemy of progress.